- STRIDE Threat Modeling using a Threat Modeling Tool

- History of STRIDE Threat Modeling

- Why Do You Need STRIDE Threat Modeling?

- The Types of STRIDE Threats Explained

- Use of Technical Diagrams Such As Data Flow Diagrams (DFDs) Within STRIDE

- STRIDE Threat Types and Their Associated Security Property

- STRIDE Threat Countermeasures, Mitigations, and Security Requirements

- Pros and Cons of STRIDE Threat Modeling

- STRIDE Threat Modeling Tooling

- Conclusion

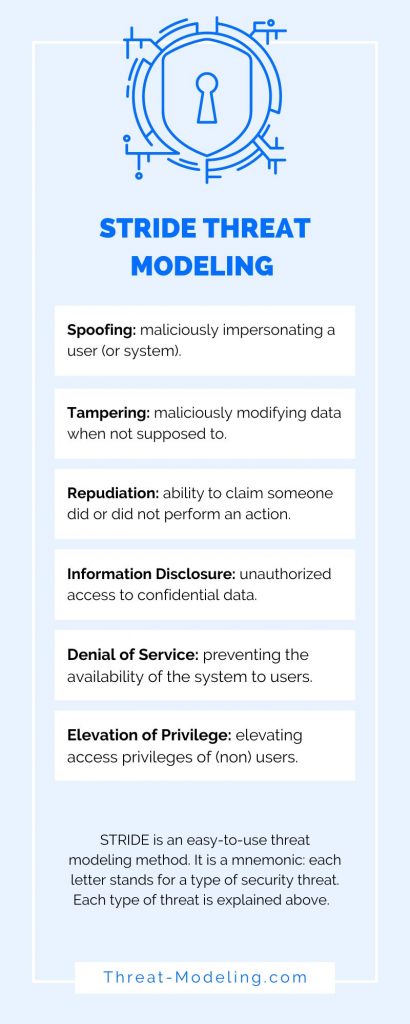

STRIDE threat modeling is a specific kind of threat modeling methodology (or method). It is a mnemonic of six types of security threats. Each letter of STRIDE stands for one of the six types of security threats:

- Spoofing

- Tampering

- Repudiation

- Information Disclosure

- Denial of Service

- Elevation of Privilege

STRIDE threat modeling is helpful because it can tell us ‘what can go wrong’ in the application, system, IT landscape, or business process that we’re (threat) modeling.

We also have our own threat modeling framework, which is easier to understand and use!

In case you don’t know what a mnemonic is (at first I didn’t either): a mnemonic is a learning aid that helps human memory. STRIDE is a mnemonic because each letter stands for a threat type, which makes the six types easier to remember.

STRIDE Threat Modeling using a Threat Modeling Tool

Do you want to develop your own STRIDE threat model in my threat modeling tool?

Try our threat modeling tool (it’s free to use – no credit card required).

How it can help:

- Develop a STRIDE threat model based on assessments and diagrams.

- The tool will guide you through the threat modeling process.

- The tool allows you to create beautiful threat modeling diagrams.

If you want more information before you dive in: read my article about the threat modeling tool.

History of STRIDE Threat Modeling

STRIDE threat modeling was first developed and used by two developers at Microsoft, Praerit Garg and Loren Kohnfelder. Microsoft has used it for decades to help secure its software and software development processes.

Adam Shostack, who worked at Microsoft as a Program Manager on the Security Development Lifecycle (SDL) team and has written a book about threat modeling, used STRIDE extensively at Microsoft.

Why Do You Need STRIDE Threat Modeling?

STRIDE is a simple threat modeling methodology that can be used by a wide variety of people, including those who don’t have a traditional background in information security.

Its simplicity means that a diverse group of team members can all contribute to a STRIDE threat model.

Having many team members with different backgrounds (including non-security and non-technical backgrounds) actually improves the overall quality and outcomes of threat modeling.

This makes STRIDE a great threat modeling methodology. If you’d like to know why you should threat model at all, read our article on what threat modeling is.

The Types of STRIDE Threats Explained

STRIDE is based on six common types of threats that we encounter today in applications, systems, IT landscapes, and even business processes. Below is an explanation of the six types of threats.

In a related post, I provide the ultimate list of STRIDE threat examples.

Before reading the definitions of the STRIDE threat types, remember that these are general explanations. Threats that you encounter (in threat modeling sessions or in the wild) may not fit the STRIDE definitions precisely, and that’s OK.

Spoofing

Spoofing is a type of threat whereby an attacker maliciously impersonates (or pretends to be) a different user (or system). You can also use Spoofing more loosely during STRIDE threat modeling to classify threats related to users and access rights.

Examples of Spoofing:

- A user maliciously performs actions as another user by bypassing access control checks. As a result, the malicious user is able to perform actions that should not be possible.

- An attacker maliciously gains the credentials of a registered user in an application and is able to log in as that user. As a result, the attacker is able to perform all actions that the registered user is able to perform.

Tampering

Tampering is a type of threat whereby an attacker maliciously modifies data. You can also use Tampering more loosely during STRIDE threat modeling to classify threats related to the security of data.

Examples of Tampering:

- An attacker is able to access and modify application data because a recently developed feature lacks access privilege checks: the new feature doesn’t properly use the access privilege control method.

- An attacker is able to directly access a database that is exposed on the internet. By modifying the data in the database, the application data is directly modified at the source.

Repudiation

Repudiation relates to the ability to prove or disprove that a specific user performed an action or activity. Repudiation is thus a type of threat whereby an attacker denies having performed a malicious action, and the system cannot prove otherwise.

Examples of Repudiation:

- An attacker performs a transaction in an online banking application. After the transaction is performed, the attacker denies that he or she was the one who performed the transaction. The online banking application is not able to prove or disprove which user performed the transaction.
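
A common countermeasure to this threat is an audit trail that records which authenticated user performed each action. The Python sketch below is a hypothetical example (the `AuditLog` class and its fields are illustrative assumptions, not part of any specific product): each entry stores the hash of the previous entry, so a later change to an entry, such as rewriting who performed a transaction, becomes detectable. A hash chain on its own only provides tamper evidence; stronger non-repudiation typically also relies on strong authentication and, for example, digital signatures.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding so the same entry always yields the same digest.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only audit log; each entry is chained to the previous one by hash."""
    entries: list = field(default_factory=list)

    def record(self, user_id: str, action: str, details: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"user_id": user_id, "action": action, "details": details, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Return False if an entry was altered or the hash chain is broken.

        Note: simply truncating the newest entries is not detected by this sketch.
        """
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("alice", "transfer", {"amount": 100, "to": "bob"})
log.record("carol", "transfer", {"amount": 50, "to": "dave"})
print(log.verify())  # True

# Rewriting who performed the first transaction breaks the chain.
log.entries[0]["user_id"] = "mallory"
print(log.verify())  # False
```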

Information Disclosure

Information Disclosure is a type of threat whereby the attacker gains access to information that should be confidential or secret (and not available to an attacker).

Examples of Information Disclosure:

- An attacker accesses an application that should only show confidential information about the currently logged-in user. However, the attacker is also able to retrieve confidential information about other users.

- An attacker accesses a database through malicious access to the underlying operating system and publishes the confidential database contents on the internet.
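
The first example is often caused by looking up records by ID without checking who owns them. The minimal Python sketch below contrasts a vulnerable lookup with one that checks ownership; the in-memory `RECORDS` store and the function names are hypothetical and only illustrate the idea.

```python
# Hypothetical in-memory data store: record ID -> record.
RECORDS = {
    1: {"owner": "alice", "salary": 50000},
    2: {"owner": "bob", "salary": 60000},
}


class AccessDenied(Exception):
    pass


def get_record_vulnerable(current_user: str, record_id: int) -> dict:
    # Information Disclosure: any logged-in user can read any record by trying other IDs.
    return RECORDS[record_id]


def get_record(current_user: str, record_id: int) -> dict:
    # Countermeasure: only return records that belong to the currently logged-in user.
    record = RECORDS.get(record_id)
    if record is None or record["owner"] != current_user:
        # Same error for "missing" and "not yours", so the existence of other users' records is not revealed.
        raise AccessDenied(f"record {record_id} not available")
    return record


print(get_record_vulnerable("alice", 2)["salary"])  # 60000 (bob's data leaks to alice)
print(get_record("alice", 1)["salary"])  # 50000
```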

Denial of Service

Denial of Service is a type of threat whereby an attacker prevents a system (or application) from working for valid users. This is often achieved by overloading a system with fake requests so that no time or resources remain for legitimate users.

Examples of Denial of Service:

- An attacker sends many thousands of fake requests to an application. As a result, the application is so busy responding to fake requests that no more resources are available to respond to valid requests from valid users.

- In a Distributed Denial of Service attack, an attacker uses a botnet of thousands of computers to send millions of fake requests to an application. As a result, the application is so busy responding to fake requests that no more resources are available to respond to valid requests from valid users.
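
One common countermeasure against the first example is per-client rate limiting, which keeps a single client’s fake requests from consuming all capacity. Below is a minimal token-bucket sketch in Python; the class names, capacity, refill rate, and client keys are illustrative assumptions. Application-level rate limiting alone usually does not stop a large distributed attack like the second example, which typically needs protection further upstream (for example, from a network or hosting provider).

```python
import time


class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable so the behavior can be demonstrated deterministically
        self.tokens = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Add tokens for the time elapsed since the last call, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical per-client limiter: one bucket per client key (e.g., IP address or API key).
buckets: dict[str, TokenBucket] = {}


def handle_request(client_id: str) -> str:
    if client_id not in buckets:
        buckets[client_id] = TokenBucket(capacity=5, rate=1.0)
    return "200 OK" if buckets[client_id].allow() else "429 Too Many Requests"


class FakeClock:
    """Manually advanced clock, used only to make the demonstration below deterministic."""

    def __init__(self) -> None:
        self.t = 0.0

    def __call__(self) -> float:
        return self.t


clock = FakeClock()
bucket = TokenBucket(capacity=5, rate=1.0, clock=clock)
burst = [bucket.allow() for _ in range(20)]
print(burst.count(True))  # 5 (the other 15 fake requests are rejected)
clock.t += 2.0  # two seconds later, two tokens have been refilled
print([bucket.allow() for _ in range(3)])  # [True, True, False]
```

Because each client gets its own bucket, one noisy client being throttled does not affect the bucket of a legitimate user.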

Elevation of Privilege

Elevation of Privilege is a type of threat whereby an attacker raises their current level of access privilege. This can include gaining access privileges when the attacker has no privileges at all (i.e., is not a user) or elevating access privileges when the attacker already has ‘some’ privileges (i.e., is a basic user).

Examples of Elevation of Privilege:

- An attacker is registered as a normal user in an application but is able to maliciously perform administrative actions that only an administrator should be able to perform.

- An attacker does not have any access to an application at all. However, due to a configuration error, the attacker is actually able to access the application.
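
The first example typically happens when an administrative action is exposed without a server-side role check. A minimal Python sketch of such a check follows; the `require_role` decorator, the user dictionaries, and the role names are hypothetical, and real applications usually rely on their framework’s Role Based Access Control (RBAC) features instead.

```python
import functools


class PermissionDenied(Exception):
    pass


def require_role(role: str):
    """Decorator that checks the caller's role on the server side before running the action."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user: dict, *args, **kwargs):
            if role not in user.get("roles", ()):
                raise PermissionDenied(f"{user.get('name')} lacks role {role!r}")
            return func(user, *args, **kwargs)
        return wrapper
    return decorator


@require_role("admin")
def delete_user(user: dict, target: str) -> str:
    # Administrative action: only reachable after the role check above succeeds.
    return f"{target} deleted by {user['name']}"


admin = {"name": "alice", "roles": ["admin"]}
normal = {"name": "bob", "roles": ["user"]}

print(delete_user(admin, "carol"))  # carol deleted by alice
try:
    delete_user(normal, "carol")
except PermissionDenied as exc:
    print("blocked:", exc)  # blocked: bob lacks role 'admin'
```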

Use of Technical Diagrams Such As Data Flow Diagrams (DFDs) Within STRIDE

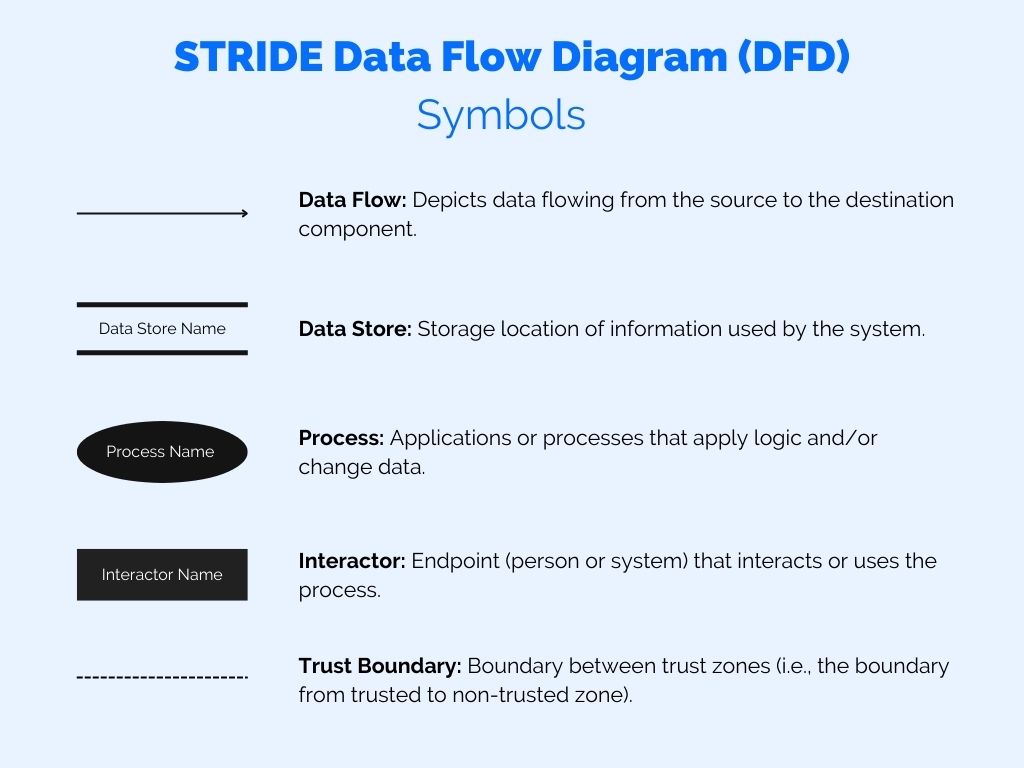

Creating or using a technical diagram such as a Data Flow Diagram (DFD) is an important part of STRIDE threat modeling.

A technical diagram will tell you how your threat modeling target works, how it behaves, and how it interacts with key components and actors. It will also communicate these things to your team members and fellow threat modelers.

A technical diagram such as a Data Flow Diagram (DFD) does not have to be an exact representation of your threat model target. It merely needs to highlight the important components, communication flows, actors, etc. In fact, diving too deeply into a technical diagram will distract from focusing on the important components (and potential threats).
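
A DFD can also be captured as simple data, which makes it easy to walk through every component with each STRIDE category. The Python sketch below is illustrative only: the component names, the trust boundary labels, and the choice to list flows that cross a trust boundary first are assumptions for this example, not a feature of any particular tool.

```python
from dataclasses import dataclass
from itertools import product

STRIDE = [
    "Spoofing",
    "Tampering",
    "Repudiation",
    "Information Disclosure",
    "Denial of Service",
    "Elevation of Privilege",
]


@dataclass(frozen=True)
class Component:
    name: str
    trust_boundary: str  # e.g., "untrusted", "internally trusted"


@dataclass(frozen=True)
class Flow:
    source: Component
    target: Component
    label: str


# Hypothetical DFD: a browser talks to an API, which talks to a database.
browser = Component("Web browser", "untrusted")
api = Component("Web API", "internally trusted")
db = Component("Database", "internally trusted")
components = [browser, api, db]
flows = [
    Flow(browser, api, "HTTPS requests"),
    Flow(api, db, "SQL queries"),
]

# Flows that cross a trust boundary are often a good place to start the discussion.
crossing = [f for f in flows if f.source.trust_boundary != f.target.trust_boundary]
for f in crossing:
    print(f"Crosses trust boundary: {f.source.name} -> {f.target.name} ({f.label})")

# One prompt per component and STRIDE category for the threat modeling session.
prompts = [f"{c.name} / {t}: what can go wrong?" for c, t in product(components, STRIDE)]
print(len(prompts))  # 18
```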

STRIDE Threat Types and Their Associated Security Property

I’ve explained the six STRIDE threat types above, but I’ll repeat them for clarity: Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, and Elevation of Privilege.

Each STRIDE threat type corresponds to a security property: a successful threat of that type violates the property, so protecting the property counters the threat.

| STRIDE threat type | Security property | Explanation |
|---|---|---|
| Spoofing | Authentication | Spoofing relates to maliciously impersonating a user or a system. Authenticating a user or system effectively, at the right time, prevents a spoofing attack from succeeding. |
| Tampering | Integrity | Tampering relates to maliciously modifying (creating, updating, or deleting) data. Integrity is a security property that defines the importance of information/data being accurate. |
| Repudiation | Non-repudiation | Repudiation relates to claiming that an action was or was not performed by a user, person, or system. Non-repudiation is the ability to prove that an action was definitely performed by a specific user, person, or system. |
| Information Disclosure | Confidentiality | Information Disclosure relates to having unauthorized access to confidential data. Confidentiality is a security property that defines the importance of information/data being kept secret. |
| Denial of Service | Availability | Denial of Service relates to overloading a system or service with fake requests so that it cannot respond to legitimate requests effectively or in a timely manner. Availability is a security property that defines the importance of a system or service being available (at all times, or within a certain time frame). |
| Elevation of Privilege | Authorization | Elevation of Privilege relates to gaining higher access privileges than intended. Authorization is the security mechanism that determines which rights a user, person, or system should have (typically managed via Role Based Access Control (RBAC) or similar). |

Three of the above security properties, Confidentiality, Integrity, and Availability, form the so-called CIA triad. Many companies use the CIA triad to classify information, IT assets, and applications.
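
The mapping in the table can be written down as a small lookup, for example to tag each identified threat with the security property it affects. A minimal Python sketch follows; the `Stride` enum and `CIA_TRIAD` names are illustrative choices, not part of any standard API.

```python
from enum import Enum


class Stride(Enum):
    """Each STRIDE threat type, mapped to the security property it violates."""
    SPOOFING = "Authentication"
    TAMPERING = "Integrity"
    REPUDIATION = "Non-repudiation"
    INFORMATION_DISCLOSURE = "Confidentiality"
    DENIAL_OF_SERVICE = "Availability"
    ELEVATION_OF_PRIVILEGE = "Authorization"


CIA_TRIAD = {"Confidentiality", "Integrity", "Availability"}

# The first letters of the threat types spell the mnemonic.
print("".join(t.name[0] for t in Stride))  # STRIDE

# The three threat types whose security property is part of the CIA triad.
print([t.name for t in Stride if t.value in CIA_TRIAD])
# ['TAMPERING', 'INFORMATION_DISCLOSURE', 'DENIAL_OF_SERVICE']
```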

STRIDE Threat Countermeasures, Mitigations, and Security Requirements

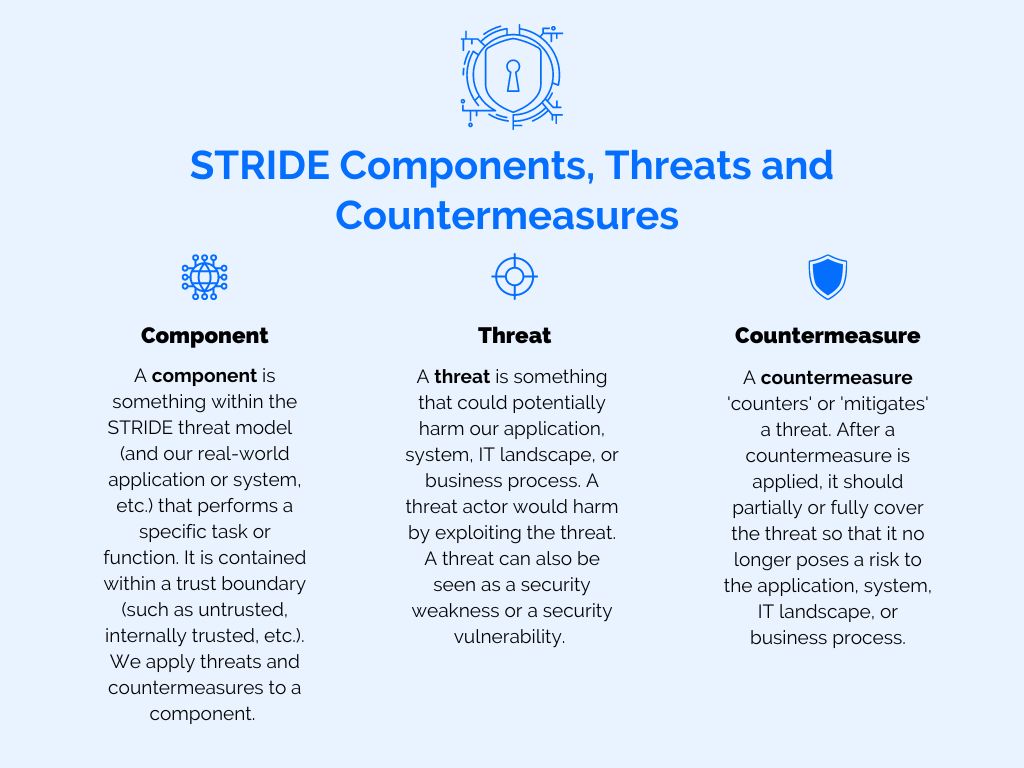

Once threats have been identified using the STRIDE threat modeling method, it is time to define countermeasures to those threats.

Note that countermeasures to threats can also be called security requirements or threat mitigations.

The countermeasures prevent the identified threats from doing harm to our application, system, IT landscape or business process.

Without defining countermeasures, there would be no real benefit to STRIDE or threat modeling because we wouldn’t actually be improving things.

The structure of countermeasures (or mitigations / security requirements) can vary, but will look something like this:

- Component X (which is a component within the scope of our threat modeling)
  - Threat X: description of the threat
    - Countermeasure 1: description of countermeasure 1
    - Countermeasure 2: description of countermeasure 2
  - Threat Y: description of the threat
    - Countermeasure 3: description of countermeasure 3
    - Countermeasure 4: description of countermeasure 4
  - Threat Z: description of the threat

Some considerations when defining countermeasures:

- Countermeasures do not have to be technology-based; they can also be covered by people or processes (or even a combination of people, process, and technology).

- You can define multiple countermeasures for one threat if a countermeasure does not fully cover the impact or risk of the threat.

Definitions of STRIDE components, threats and countermeasures:

- STRIDE component: A component is something within the STRIDE threat model (and our real-world application or system, etc.) that performs a specific task or function. It is contained within a trust boundary (such as untrusted, internally trusted, etc.). We apply threats and countermeasures to a component.

- STRIDE threat: A threat is something that could potentially harm our application, system, IT landscape, or business process. A threat actor causes harm by exploiting the threat. A threat can also be seen as a security weakness or a security vulnerability.

- STRIDE countermeasure: A countermeasure ‘counters’ or ‘mitigates’ a threat. After a countermeasure is applied, it should partially or fully cover the threat, reducing or removing the risk the threat poses to the application, system, IT landscape, or business process.
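
The component, threat, and countermeasure structure described in this section maps naturally onto a small data model. The Python sketch below is a hypothetical example: class and field names are illustrative, and recording "fully covers" as a single boolean per countermeasure is a simplifying assumption (in practice it is the team’s judgment). It flags threats that no countermeasure yet covers fully, which is where adding more countermeasures may be needed.

```python
from dataclasses import dataclass, field


@dataclass
class Countermeasure:
    description: str
    kind: str  # "people", "process", or "technology"
    fully_covers: bool = False  # the team's judgment: does this alone fully cover the threat?


@dataclass
class Threat:
    description: str
    stride_type: str
    countermeasures: list[Countermeasure] = field(default_factory=list)


@dataclass
class Component:
    name: str
    trust_boundary: str
    threats: list[Threat] = field(default_factory=list)


def open_threats(component: Component) -> list[Threat]:
    """Return threats that have no countermeasure judged to fully cover them."""
    return [
        t for t in component.threats
        if not any(c.fully_covers for c in t.countermeasures)
    ]


database = Component(
    name="Customer database",
    trust_boundary="internally trusted",
    threats=[
        Threat(
            description="Database exposed on the internet can be modified directly",
            stride_type="Tampering",
            countermeasures=[
                Countermeasure("Allow network access only from the application servers", "technology", fully_covers=True),
                Countermeasure("Review firewall rules on every change", "process"),
            ],
        ),
        Threat(
            description="Flood of fake queries exhausts database resources",
            stride_type="Denial of Service",
            countermeasures=[Countermeasure("Per-client rate limiting in the application", "technology")],
        ),
    ],
)

for threat in open_threats(database):
    print(f"[{threat.stride_type}] needs more countermeasures: {threat.description}")
# [Denial of Service] needs more countermeasures: Flood of fake queries exhausts database resources
```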

Pros and Cons of STRIDE Threat Modeling

Pros:

- Easy to understand and easy to teach – which helps to adopt STRIDE among non-security and non-technical team members.

- Quickly identify high-level threats that may impact the system that you are modeling.

- Relatively quick to perform.

Cons:

- May not identify many potential detailed threats, which means that some threats are missed.

- Does not include a mechanism to take standard frameworks into account (like NIST CSF, application requirements, etc.).

STRIDE Threat Modeling Tooling

Performing STRIDE threat modeling can be achieved without tooling. However, it’s far easier to do so with a threat modeling tool.

Advantages of using STRIDE threat modeling tooling:

- Following a specific and enforced STRIDE threat modeling process.

- Easier registration of results (i.e. threats and security requirements).

- Easier learning for new STRIDE threat modelers.

- Getting automated input (for known threats and known threat intelligence sources).

- Better reporting (especially for enterprise environments).

Conclusion

STRIDE threat modeling is one of the most well-known threat modeling methods and also one of the easiest to understand.

Because it’s easy to understand, a diverse team can quickly learn the method and apply it to their application, system, IT landscape, or business process.